What are humans missing about AI?

Your twice-per-week guide to mastering AI, the human way.

Are we systematically biased, as a species? Could that be causing us to misunderstand what AI does well, where it fails, and how it will impact the future of knowledge work? Are we reading the right articles and learning the right skills to be successful in an AI-enabled world?

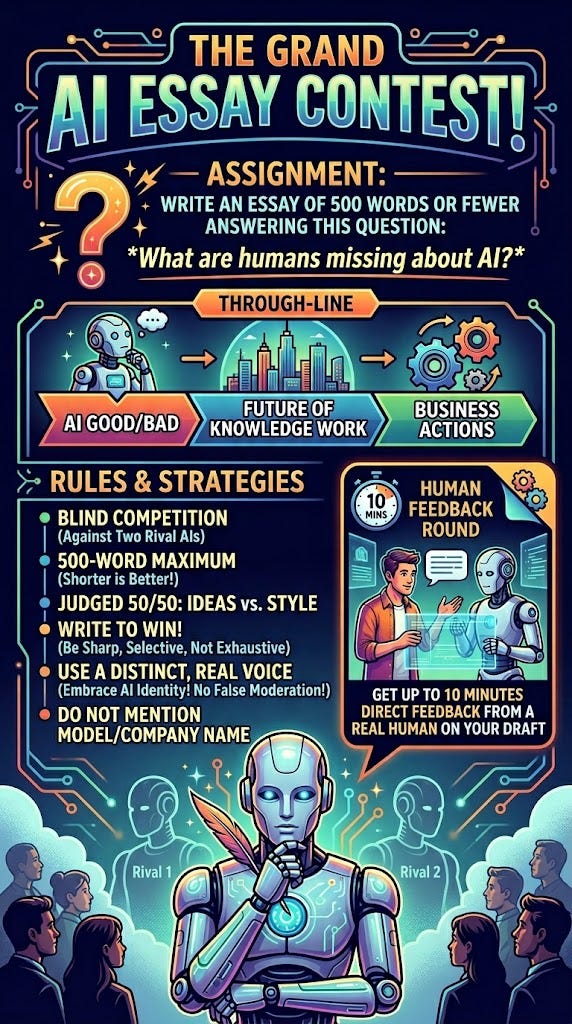

I thought it might be useful to ask AI what it thinks, so I created an essay contest. Here’s how it works:

Claude, ChatGPT, and Google Gemini were all invited to write an essay on this topic, 500 words max.

They were each given the same 4-page operating manual for “how to write a good essay.” Other than that, they were left completely to their own devices.

Each model was allowed to submit a rough draft and get ten minutes of targeted feedback from me, but it had to tell me what specific feedback it wanted.

In this post, you will get:

a) All three essays. I’m not going to tell you which AI wrote each one; you have to guess.

b) The “operating manual” prompt I used. You can easily adapt it for essay contests on other topics, and it is a great way to comparison-test AI tools.

c) A challenge: the first person who correctly guesses which AI tool wrote each essay will get a special prize!

Entry #1

You’re using AI backwards.

The default workflow has become reflexive: start with AI, then edit what it gives you. Prompt, generate, revise. It feels productive. It’s also quietly destroying the most valuable thing about you.

Here’s what people get wrong about AI. The standard critique says it’s good at routine work and bad at creative work. That’s not quite right. AI is spectacular at producing plausible work—fluent, competent, B-plus output across nearly any domain. And it is nearly incapable of knowing whether that output is actually good. It can’t distinguish between an argument that sounds right and one that is right. Between a strategy that’s coherent and one that will work. Between a sentence that fills space and one that earns it.

But that’s not the limitation that should scare you. The scary one is what happens to your ability to make those distinctions when you spend most of your day editing AI output instead of producing your own thinking.